MiniMax M2.7's Writing Prowess is Underestimated: A Practical Guide for Content Creators

Author

Leah

Date Published

TL;DR: Key Takeaways

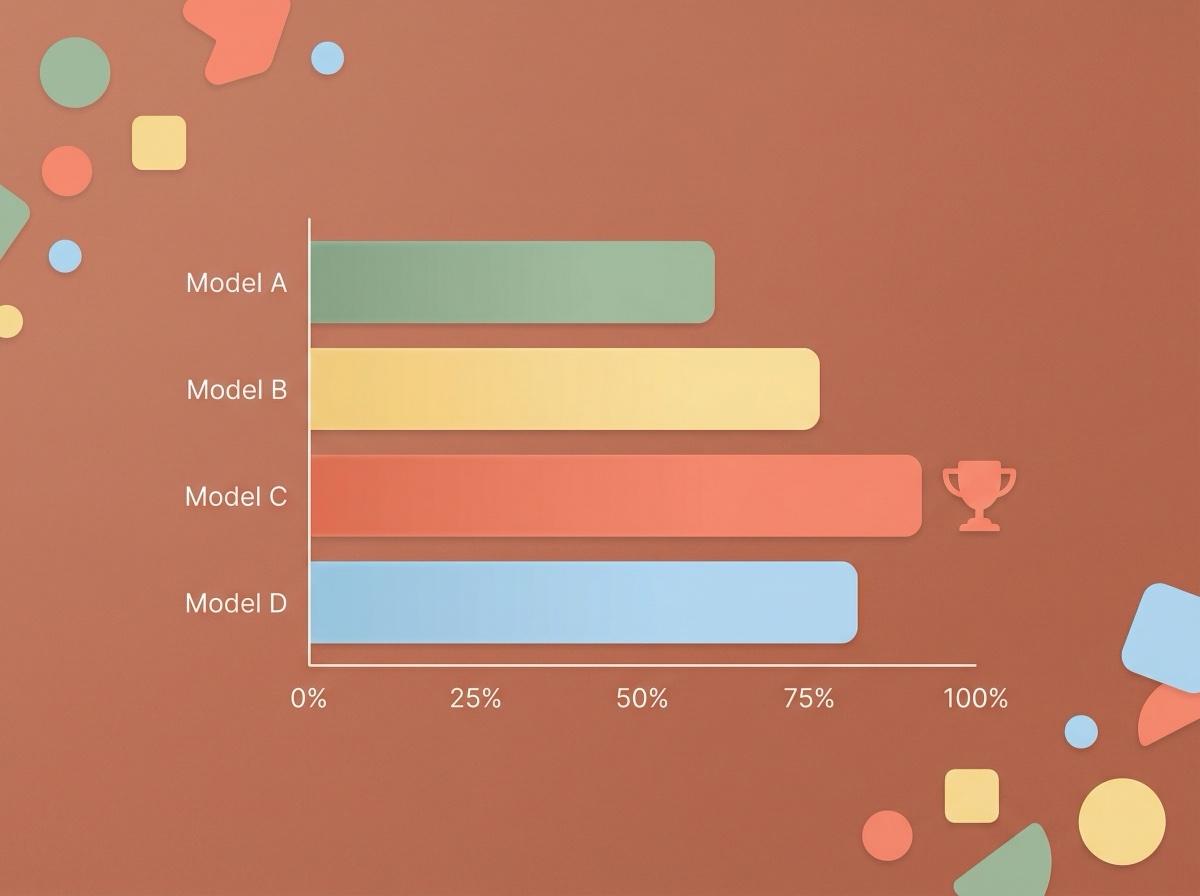

- MiniMax M2.7 achieved an average score of 91.7 in an independent text creation evaluation, surpassing GPT-5.4 (90.2) and Claude Opus 4.6 (88.5). Comprehensive leaderboards significantly undervalue it as a writing model.

- M2.7's API is priced at only $0.30 per million input tokens, less than one-tenth the cost of Claude Opus 4.6. Content creators can get top-tier text output on a very low budget.

- M2.7 excels in three major text scenarios: polishing, summarization, and translation. However, it has shortcomings in complex reasoning and multi-scenario persona consistency, making it best suited for use alongside other models.

An Overlooked Fact: M2.7 Ranks First in Writing Ability

You may have already seen many reports about MiniMax M2.7. Almost all of them discuss its programming capabilities, its Agent self-evolution mechanism, and its 56.22% SWE-Pro score. Few mention a key data point: in an independent text creation evaluation published on Zhihu, covering polishing, summarization, and translation, M2.7 ranked first with an average score of 91.7, ahead of GPT-5.4 (90.2), Kimi K2.5 (88.6), and Claude Opus 4.6 (88.5) [1].

What does this mean? If you are a blogger, newsletter author, social media manager, or video scriptwriter, M2.7 might be the most cost-effective AI writing tool currently available, yet you have likely heard almost no one recommend it.

From a content creator's perspective, this article will analyze the true writing capabilities of MiniMax M2.7, telling you what it’s good at, what it’s not, and how to integrate it into your daily creative workflow.

Just How Strong is MiniMax M2.7's Writing Ability?

Let's look at the hard data first. According to the in-depth evaluation report on Zhihu, M2.7's results on the text creation "fair use case set" show an interesting "ranking inversion": it ranks only 11th overall but 1st in the text creation sub-category. The overall score is dragged down by the reasoning and logic dimensions, not by text capability itself [1].

Specifically, here is its performance in three core writing scenarios:

Polishing Ability: M2.7 can accurately identify the tone and style of the original text, optimizing expression while maintaining the author's voice. This is crucial for bloggers who need to edit large volumes of drafts. In actual tests, its polishing output consistently ranked the highest among all models.

Summarization Ability: When faced with long research reports or industry documents, M2.7 can extract core arguments and generate clearly structured summaries. Official MiniMax data shows that M2.7 achieved an ELO score of 1495 in the GDPval-AA evaluation, the highest among Chinese domestic models, which suggests top-tier ability in understanding and processing professional documents [2].

Translation Ability: For creators who need to produce bilingual Chinese-English content, M2.7's translation quality also leads in evaluations. Its handling of Chinese is particularly strong, with a conversion ratio of approximately 1000 tokens to 1600 Chinese characters, making it more token-efficient than most overseas models [3].

Notably, M2.7 achieved this level with only 10 billion active parameters. In comparison, Claude Opus 4.6 and GPT-5.4 have much larger parameter scales. A report by VentureBeat pointed out that M2.7 is currently the smallest model in the Tier-1 performance category [4].

Why Should Content Creators Care About This "Programming Model"?

When M2.7 was released, it was positioned as the "first AI model to deeply participate in its own iteration," highlighting Agent capabilities and software engineering. As a result, most content creators ignored it outright. However, a close look at MiniMax's official introduction reveals an easily overlooked detail: M2.7 has been systematically optimized for office scenarios and can handle the generation and multi-round editing of documents such as Word, Excel, and Slides [2].

An evaluation article by ifanr put it precisely: "After testing it, what really caught our attention about MiniMax M2.7 wasn't that it achieved a 66.6% medal rate in Kaggle competitions, nor that it delivered the Office suite cleanly." What was truly impressive was the initiative and depth of understanding it displayed in complex tasks [5].

For content creators, this "initiative" manifests in several ways. When you give M2.7 a vague writing requirement, it does not mechanically execute instructions; instead, it actively seeks solutions, iterates on earlier outputs, and explains its choices in detail. Reddit users in an r/LocalLLaMA evaluation observed similar traits in coding tasks: M2.7 reads a significant amount of context before starting to write, analyzing dependencies and call chains [6].

There is also a practical factor: cost. M2.7's API is priced at $0.30 per million input tokens and $1.20 per million output tokens. According to data from Artificial Analysis, its blended price is approximately $0.53 per million tokens [7]. In contrast, the cost of Claude Opus 4.6 is 10 to 20 times higher. For creators who need to generate large amounts of content daily, this price gap means you can run over 10 times more tasks with the same budget.
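The blended figure can be approximated from the two list prices. The sketch below assumes a 3:1 weighting of input to output tokens, which is a common convention for blended pricing; the ratio itself is an assumption and is not stated in the data cited above.

```python
INPUT_PRICE_PER_M = 0.30   # USD per million input tokens (from the article)
OUTPUT_PRICE_PER_M = 1.20  # USD per million output tokens (from the article)


def blended_price(input_price, output_price, input_parts=3, output_parts=1):
    """Weighted average price per million tokens.

    The default 3:1 input:output weighting is an assumption,
    not something confirmed by the cited sources.
    """
    total_parts = input_parts + output_parts
    return (input_price * input_parts + output_price * output_parts) / total_parts


blended = blended_price(INPUT_PRICE_PER_M, OUTPUT_PRICE_PER_M)
print(f"Blended price: ${blended:.3f} per million tokens")
```

Under that assumption the result is about $0.525 per million tokens, in line with the reported figure of approximately $0.53. If your own workload is output-heavy, pass a different ratio to see how the effective price shifts.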

A Practical Guide to M2.7 for Content Creators

Now that you understand M2.7's writing prowess, the key question is: how do you use it? Here are three proven, high-efficiency usage scenarios.

Scenario 1: Long-form Research and Summary Generation

Suppose you are writing an in-depth article about an industry trend and need to digest more than 10 reference materials. The traditional approach is to read each one and manually extract key points. With M2.7, you can feed the materials to it, have it generate a structured summary, and then expand your writing based on that summary. M2.7 performed excellently in search evaluations like BrowseComp, indicating its information retrieval and integration capabilities have been specifically trained.

In YouMind, you can save research materials such as webpages, PDFs, and videos directly to a Board (knowledge space), then call upon AI to ask questions and summarize these materials. YouMind supports multiple models, including MiniMax, allowing you to complete the entire workflow from material collection to content generation in one workspace without switching back and forth between platforms.

Scenario 2: Multilingual Content Rewriting

If you operate content for an international audience, M2.7's Chinese and English processing capability is a practical advantage. You can write a first draft in Chinese and then have M2.7 translate and polish it into an English version, or vice versa. Because its Chinese token efficiency is high (1000 tokens ≈ 1600 Chinese characters), the cost of processing Chinese content is lower than using overseas models.

Scenario 3: Batch Content Production

Social media managers often need to break down a long article into multiple tweets, Xiaohongshu notes, or short video scripts. M2.7's 97% Skill Adherence rate means it can strictly follow the format and style requirements you set [2]. You can create different prompt templates for different platforms, and M2.7 will execute them faithfully without deviating from instructions.
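One way to organize per-platform prompt templates is a small lookup table. This is only a sketch: the platform keys, the wording of each template, and the `build_prompt` helper are all hypothetical and should be adapted to your own style guide.

```python
from string import Template

# Hypothetical templates; the wording and limits are illustrative only.
PLATFORM_TEMPLATES = {
    "tweet": Template(
        "Rewrite the article below as a thread of $count tweets. "
        "Each tweet must stay under 280 characters.\n\nArticle:\n$article"
    ),
    "xiaohongshu": Template(
        "Turn the article below into $count Xiaohongshu notes, each with "
        "a short title and a casual, first-person tone.\n\nArticle:\n$article"
    ),
    "short_video": Template(
        "Write $count short video scripts based on the article below. "
        "Open each script with a one-sentence hook.\n\nArticle:\n$article"
    ),
}


def build_prompt(platform, article, count=3):
    """Fill the template for one platform; unknown platforms raise ValueError."""
    try:
        template = PLATFORM_TEMPLATES[platform]
    except KeyError:
        raise ValueError(f"No template for platform: {platform}") from None
    return template.substitute(article=article, count=count)


print(build_prompt("tweet", "Your long article text goes here."))
```

Keeping the templates in one place makes it easy to send the same article through every platform in a loop and to revise a format rule without touching the rest of the pipeline.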

It is worth noting that M2.7 is not without its weaknesses. The Zhihu evaluation showed that it scored only 81.7 in the "multi-scenario persona consistency writing" use case, with significant disagreement among reviewers [1]. This means that if you need the model to maintain a stable persona over long conversations (such as simulating a specific brand's tone), M2.7 might not be the best choice. Additionally, Reddit users reported a median task duration of 355 seconds, which is slower than previous versions [6]. For scenarios requiring rapid iteration, you may need to use it alongside other faster models.

In YouMind, using multiple models together is very convenient. The platform simultaneously supports multiple models like GPT, Claude, Gemini, Kimi, and MiniMax. You can flexibly switch based on the needs of different tasks—using M2.7 for text polishing and summarization, and other models for tasks requiring strong reasoning.

Comparison of M2.7 with Other AI Writing Tools

| Tool | Best Use Case | Free Version | Core Advantage |
|---|---|---|---|
| YouMind | One-stop research + content generation | ✅ | Multi-model switching, Board knowledge management, full loop from materials to creation |
| MiniMax official platform | Direct M2.7 API calls | ✅ | Native API experience, Coding Plan subscription |
| Kimi | Long document understanding and dialogue | ✅ | Ultra-long context window |
| Tongyi Qianwen | General Chinese writing | ✅ | Alibaba ecosystem integration, multimodal |

It should be noted that YouMind's core value does not lie in replacing any single model, but in providing a creative environment that integrates multiple models. You can save all research materials in a YouMind Board, conduct deep Q&A with AI, and then generate content directly in the Craft editor. This closed-loop workflow of "learning, thinking, and creating" is something that cannot be achieved by using any single model API alone. Of course, if you only need pure API calls, the MiniMax official platform or third-party services like OpenRouter are also good choices.

FAQ

Q: What type of content is MiniMax M2.7 suitable for writing?

A: M2.7 performs strongest in polishing, summarization, and translation, ranking first with an average evaluation score of 91.7. It is particularly suitable for long blog posts, research report summaries, bilingual Chinese-English content, and social media copy. It is less suitable for scenarios requiring a fixed persona over long periods, such as brand virtual assistant dialogues.

Q: Is MiniMax M2.7's writing ability really stronger than GPT-5.4 and Claude Opus 4.6?

A: In the Zhihu independent evaluation's text creation fair use case set, M2.7's average score of 91.7 was indeed higher than GPT-5.4 (90.2) and Opus 4.6 (88.5). However, note that this is a score for the text generation sub-category; M2.7's overall ranking (including reasoning, logic, etc.) was only 11th. It is a typical "strong text, weak reasoning" model.

Q: How much does it cost to write a 3000-character Chinese article with MiniMax M2.7?

A: Based on the ratio of 1000 tokens ≈ 1600 Chinese characters, 3000 characters consume about 1875 input tokens and a similar number of output tokens. With M2.7's API pricing ($0.30 / million input + $1.20 / million output), the cost per article is less than $0.01, which is almost negligible. Even including token consumption for prompts and context, the cost of an article is unlikely to exceed $0.05.
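The arithmetic behind this estimate can be checked with a few lines. This is a rough sketch that uses only the figures quoted in this article and, like the answer above, assumes the output is about as long as the input; the function names are illustrative.

```python
CHARS_PER_1000_TOKENS = 1600   # ratio quoted in the article
INPUT_PRICE_PER_M = 0.30       # USD per million input tokens
OUTPUT_PRICE_PER_M = 1.20      # USD per million output tokens


def chars_to_tokens(chars):
    """Estimate token count from a Chinese character count."""
    return chars * 1000 / CHARS_PER_1000_TOKENS


def article_cost(chars):
    """Return (tokens, cost in USD), assuming output length equals input length."""
    tokens = chars_to_tokens(chars)
    input_cost = tokens * INPUT_PRICE_PER_M / 1_000_000
    output_cost = tokens * OUTPUT_PRICE_PER_M / 1_000_000
    return tokens, input_cost + output_cost


tokens, cost = article_cost(3000)
print(f"{tokens:.0f} tokens each way, about ${cost:.4f} per article")
```

For 3000 characters this works out to 1875 tokens in each direction and roughly $0.0028 per article, which is where the "less than $0.01" figure comes from.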

Q: How does M2.7 compare to other domestic AI writing tools like Kimi and Tongyi Qianwen?

A: Each has its own focus. M2.7 leads in text generation quality in evaluations and has extremely low costs, making it suitable for batch content production. Kimi's advantage lies in ultra-long context understanding, suitable for processing long documents. Tongyi Qianwen is deeply integrated with the Alibaba ecosystem and is suitable for scenarios requiring multimodal capabilities. It is recommended to choose based on specific needs or use a multi-model platform like YouMind to switch flexibly.

Q: Where can I use MiniMax M2.7?

A: You can call it directly through the MiniMax official API platform or access it via third-party services like OpenRouter. If you don't want to deal with API configurations, creative platforms like YouMind that integrate multiple models allow you to use it directly in the interface without writing code.
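For readers who do want to try the API route, the sketch below builds (but does not send) a chat request against OpenRouter's OpenAI-compatible endpoint using only the Python standard library. The model slug `minimax/minimax-m2.7` is a hypothetical placeholder, so check the provider's model list for the real identifier; the `OPENROUTER_API_KEY` environment variable name is likewise an assumption.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_ID = "minimax/minimax-m2.7"  # hypothetical slug; verify before use


def build_request(prompt, api_key):
    """Build a POST request for a single-turn chat completion."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# The key name below is an assumption; the fallback is a dummy value.
api_key = os.environ.get("OPENROUTER_API_KEY", "sk-placeholder")
request = build_request("Polish this paragraph: ...", api_key)
print(request.get_method(), request.full_url)
```

Sending the request is then a matter of passing it to `urllib.request.urlopen(request)` with a valid key and network access, and reading the JSON response.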

Summary

MiniMax M2.7 is the Chinese domestic large model most worth the attention of content creators in March 2026. Its text creation capability is significantly undervalued by comprehensive leaderboards: its average score of 91.7 surpasses every mainstream model in the Zhihu evaluation, while its API cost is one-tenth or less that of top competitors such as Claude Opus 4.6.

Three core points are worth remembering: First, M2.7 performs at a top-tier level in polishing, summarization, and translation scenarios, making it suitable as a primary model for daily writing; second, its weaknesses lie in reasoning and persona consistency, so complex logical tasks should be paired with other models; third, the pricing of $0.30 / million input tokens makes batch content production extremely economical.

If you want to use M2.7 alongside other mainstream models on a single platform to complete the entire process from material collection to content publishing, you can try YouMind for free. Save your research materials to a Board, let AI help you organize and generate content, and experience a one-stop workflow of "learning, thinking, and creating."

References

[1] MiniMax-M2.7 In-depth Evaluation Report

[2] MiniMax M2.7: Early Echoes of Self-Evolution

[3] MiniMax API Pricing Documentation

[4] Report on the Release of MiniMax M2.7 Self-Evolving AI Model (VentureBeat)

[5] Testing MiniMax M2.7: When AI Gets Serious, It Even Outcompetes Itself (ifanr)

[6] MiniMax M2.7 Independent Benchmark Results (Reddit r/LocalLLaMA)

[7] MiniMax-M2.7 Performance and Price Analysis (Artificial Analysis)