Why Do AI Agents Always Forget Things? A Deep Dive into the MemOS Memory System

Author

Jared Liu

TL;DR: Key Takeaways

- Current AI Agents face severe "memory loss" issues in long conversations, with 65% of enterprise AI failures directly related to context drift.

- MemOS extracts memory from the Prompt into a system-level independent component, reducing actual Token consumption by approximately 61% and improving temporal reasoning accuracy by 159%.

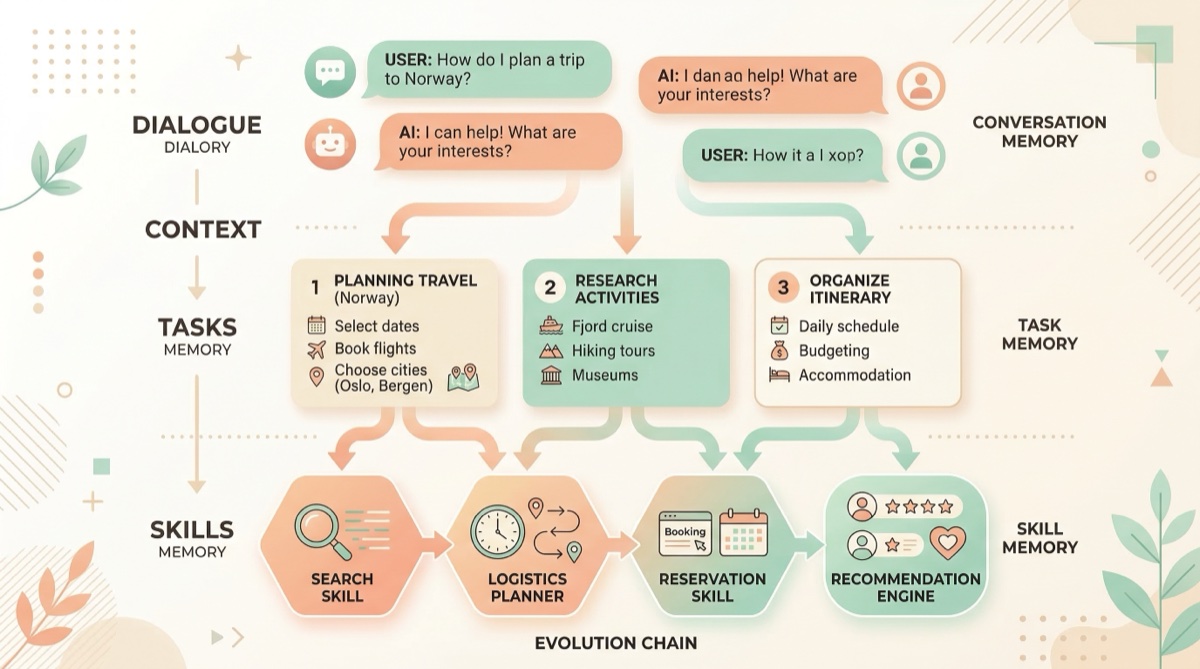

- The most core differentiation of MemOS lies in its "conversation → Task → Skill" memory evolution chain, enabling Agents to truly reuse experience.

- This article provides a horizontal comparison of four major Agent memory solutions: MemOS, Mem0, Zep, and Letta, to help developers quickly choose the right one.

Does Your AI Agent Keep Asking the Same Questions?

You've probably run into this. You spend half an hour walking an AI Agent through a project's background. The next day you start a new session, and it asks from scratch, "What is your project about?" Worse still, halfway through a complex multi-step task, the Agent suddenly "forgets" the steps it has already completed and starts repeating them.

This is not an isolated case. According to a 2025 report by Zylos Research, nearly 65% of enterprise AI application failures can be attributed to context drift or memory loss [1]. The root cause is that most Agent frameworks still rely on the Context Window to maintain state. The longer the session, the higher the Token overhead, and the more easily critical information gets buried in long conversation histories.

This article is for developers building AI Agents, engineers using frameworks such as LangChain or CrewAI, and any technical professional who has been shocked by a Token bill. We take a close look at how the open-source project MemOS tackles this problem with a "memory operating system" approach. We then compare mainstream memory solutions side by side to help you make a technology choice.

Why Is Long-Term Memory So Difficult for AI Agents?

To understand what MemOS solves, we first need to pin down where an AI Agent's memory problem actually lies.

A Context Window is not memory. Many people assume that Gemini's 1M-Token window or Claude's 200K-Token window is "enough," but window size and memory capacity are two different things. A JetBrains Research study from late 2025 found that as context length grows, LLMs make significantly less effective use of the information in it [2]. Stuffing the entire conversation history into the Prompt makes it harder for the Agent to find critical information. It also triggers the "Lost in the Middle" effect, in which content in the middle of the context is recalled worst.

Token costs balloon. A typical customer service Agent consumes approximately 3,500 Tokens per interaction [3]. Suppose the full conversation history and knowledge base context must be reloaded on every turn. Then each turn pays again for everything before it, and cumulative Token usage grows quadratically with session length. An application with 10,000 daily active users can easily reach five figures in monthly Token costs. That is before counting the extra consumption from multi-turn reasoning and tool calls.

Experience cannot be accumulated and reused. This is the most easily overlooked problem. If an Agent helps a user solve a complex data cleaning task today, it won't "remember" the solution the next time it meets a similar problem. Every interaction is a one-off, so no reusable experience ever forms. As an analysis by Tencent News put it: "An Agent without memory is just an advanced chatbot" [4].

Together, these three problems make up one of the most intractable infrastructure bottlenecks in Agent development today.
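The cost problem compounds quickly. Re-sending the full history means each turn pays again for every earlier turn, so cumulative input Tokens grow quadratically. A memory layer that injects a fixed-size set of retrieved memories grows only linearly. The sketch below compares the two. The function names, the 350 Tokens per turn, and the 1,000-Token memory budget are illustrative assumptions, not measured MemOS figures.

```python
def full_history_tokens(turns: int, tokens_per_turn: int) -> int:
    """Cumulative input Tokens when every turn re-sends the whole history.

    Turn n sends n * tokens_per_turn Tokens, so the total is
    tokens_per_turn * turns * (turns + 1) / 2: quadratic in the turn count.
    """
    return sum(n * tokens_per_turn for n in range(1, turns + 1))


def retrieved_memory_tokens(turns: int, tokens_per_turn: int, memory_budget: int) -> int:
    """Cumulative input Tokens when each turn sends only the new message
    plus a fixed budget of retrieved memory: linear in the turn count."""
    return turns * (tokens_per_turn + memory_budget)


if __name__ == "__main__":
    # Illustrative assumptions: 350 Tokens per turn, 1,000-Token memory budget.
    for turns in (10, 50, 100):
        full = full_history_tokens(turns, 350)
        mem = retrieved_memory_tokens(turns, 350, 1_000)
        print(f"{turns:>3} turns: full history {full:>9,} | retrieved memory {mem:>7,}")
```

Under these assumptions, 100 turns cost 1,767,500 input Tokens with full-history reloading versus 135,000 with a fixed memory budget. The gap widens with every additional turn.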

MemOS's Solution: Turning Memory into an Operating System

MemOS is developed by the Chinese startup MemTensor. The company first presented the Memory³ hierarchical large model at the World Artificial Intelligence Conference (WAIC) in July 2024 and officially open-sourced MemOS 1.0 in July 2025. The project has since reached v2.0 "Stardust." It is released under the Apache 2.0 license and remains under active development on GitHub.

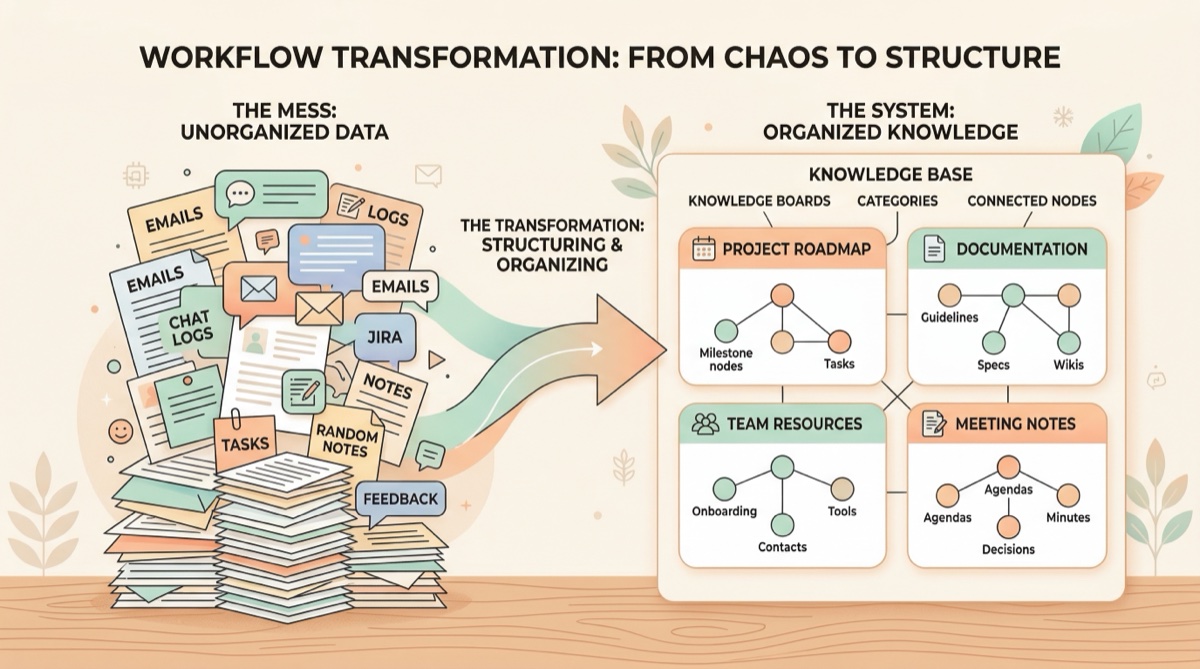

The core idea of MemOS fits in one sentence: take memory out of the Prompt and run it as an independent component at the system layer.

The traditional approach stuffs all conversation history, user preferences, and task context into the Prompt, forcing the LLM to "re-read" everything on each inference. MemOS takes a different approach. It inserts a "memory operating system" layer between the LLM and the application, responsible for storing, retrieving, updating, and scheduling memory. The Agent no longer loads the full history every time. Instead, MemOS retrieves the memory fragments most semantically relevant to the current task and places only those in the context.

This architecture brings three direct benefits:

First, Token consumption drops significantly. MemOS's official results on the LoCoMo benchmark show Token consumption reduced by approximately 60.95% compared with traditional full-load methods, with memory Token savings of 35.24% [5]. A report from JiQiZhiXing put the overall accuracy gain at 38.97% [6]. In other words, MemOS achieves better results with fewer Tokens.

Second, memory persists across sessions. MemOS automatically extracts key information from conversations and stores it persistently. When a new session starts, the Agent can access previously accumulated memories directly, so the user no longer has to re-explain the background. In Local mode, data is stored in SQLite and never leaves your machine, which keeps it private.

Third, memory can be shared across Agents. Multiple Agent instances can share memory through the same user_id, enabling automatic context handover. This is a critical capability for multi-Agent collaborative systems.
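Persistence and sharing both come from storing memory outside any single session or Agent, keyed by `user_id`. The sketch below uses Python's built-in `sqlite3` module. One Agent writes a memory in one session. Later, a second Agent opens a fresh connection and reads it back under the same `user_id`. The table schema and the function names are hypothetical and do not describe MemOS's actual storage layout.

```python
import os
import sqlite3
import tempfile

# Hypothetical schema: one row per memory, tagged with the user and the writing Agent.
SCHEMA = (
    "CREATE TABLE IF NOT EXISTS memories ("
    "id INTEGER PRIMARY KEY, user_id TEXT NOT NULL, "
    "agent TEXT NOT NULL, content TEXT NOT NULL)"
)


def save_memory(db_path: str, user_id: str, agent: str, content: str) -> None:
    """Persist one memory; each call opens and closes its own connection (a 'session')."""
    conn = sqlite3.connect(db_path)
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(SCHEMA)
            conn.execute(
                "INSERT INTO memories (user_id, agent, content) VALUES (?, ?, ?)",
                (user_id, agent, content),
            )
    finally:
        conn.close()


def load_memories(db_path: str, user_id: str) -> list[tuple[str, str]]:
    """Return (agent, content) pairs for a user, oldest first, whichever Agent wrote them."""
    conn = sqlite3.connect(db_path)
    try:
        conn.execute(SCHEMA)
        rows = conn.execute(
            "SELECT agent, content FROM memories WHERE user_id = ? ORDER BY id",
            (user_id,),
        ).fetchall()
    finally:
        conn.close()
    return rows


if __name__ == "__main__":
    db = os.path.join(tempfile.mkdtemp(), "memory.db")
    # Session 1: a planning Agent records progress.
    save_memory(db, "user-42", "planner", "Step 1 (load CSV) is done")
    # Session 2, later: an executing Agent picks up where the planner left off.
    print(load_memories(db, "user-42"))
```

Because the store is keyed by user rather than by Agent, adding a third Agent requires no extra handover logic.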

The Most Interesting Feature: How Conversations Evolve into Reusable Skills

The most striking part of MemOS's design is its "memory evolution chain."

Most memory systems focus on "storing" and "retrieving": saving conversation history and retrieving it when needed. MemOS adds another layer of abstraction. Conversation content doesn't accumulate verbatim but evolves through three stages:

Stage One: Conversation → Structured Memory. Raw conversations are automatically extracted into structured memory entries containing key facts, user preferences, timestamps, and other metadata. MemOS performs this extraction with its in-house MemReader model, available in 4B, 1.7B, and 0.6B sizes. The model is reported to be more efficient and accurate than summarizing directly with GPT-4.

Stage Two: Memory → Task. When the system identifies that certain memory entries are associated with specific task patterns, it automatically aggregates them into Task-level knowledge units. For example, if you repeatedly ask the Agent to perform "Python data cleaning," the relevant conversation memories will be categorized into a Task template.

Stage Three: Task → Skill. When a Task is repeatedly triggered and validated as effective, it evolves further into a reusable Skill. As a result, when the Agent meets a problem it has handled before, it is unlikely to ask you about it again. Instead, it can invoke the existing Skill directly.
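The three stages can be sketched as a small pipeline. Everything below is a hypothetical illustration, not MemOS code:

- In Stage One, a keyword rule stands in for MemReader.
- In Stage Two, each message is assumed to arrive with a task tag; how MemOS detects task patterns is not covered here.
- In Stage Three, the thresholds (at least 3 triggers and an 80% success rate) are invented for the example. The article says only that a Task must be "repeatedly triggered and validated as effective."

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class MemoryEntry:
    """Stage One output: a structured record instead of raw dialogue."""
    kind: str            # "preference" or "fact" (hypothetical labels)
    content: str         # the extracted statement
    task_tag: str        # assumed to be supplied alongside the message
    timestamp: datetime  # when the memory was recorded


@dataclass
class Task:
    """Stage Two output: memories aggregated under one task pattern."""
    name: str
    memories: list[MemoryEntry] = field(default_factory=list)
    triggers: int = 0
    successes: int = 0


def extract(message: str, task_tag: str) -> MemoryEntry:
    """Naive keyword rule standing in for a trained extractor such as MemReader."""
    kind = "preference" if "prefer" in message.lower() else "fact"
    return MemoryEntry(kind, message.strip(), task_tag, datetime.now(timezone.utc))


class EvolutionChain:
    """Hypothetical conversation -> Task -> Skill pipeline with assumed thresholds."""

    def __init__(self, min_triggers: int = 3, min_success_rate: float = 0.8) -> None:
        self.min_triggers = min_triggers
        self.min_success_rate = min_success_rate
        self.tasks: dict[str, Task] = {}
        self.skills: dict[str, list[str]] = {}  # Skill name -> reusable steps

    def remember(self, message: str, task_tag: str) -> None:
        """Stages One and Two: extract a memory and file it under its Task."""
        entry = extract(message, task_tag)
        self.tasks.setdefault(task_tag, Task(task_tag)).memories.append(entry)

    def record_run(self, task_tag: str, succeeded: bool) -> None:
        """Stage Three: promote a Task to a Skill once it is used often and works."""
        task = self.tasks.setdefault(task_tag, Task(task_tag))
        task.triggers += 1
        task.successes += int(succeeded)
        if (task.triggers >= self.min_triggers
                and task.successes / task.triggers >= self.min_success_rate):
            self.skills[task_tag] = [m.content for m in task.memories]

    def skill_for(self, task_tag: str) -> Optional[list[str]]:
        """Return the stored steps if a Skill exists, so the Agent need not ask again."""
        return self.skills.get(task_tag)


if __name__ == "__main__":
    chain = EvolutionChain()
    chain.remember("Drop rows where customer_id is null", "python-data-cleaning")
    chain.remember("Normalize the date column to ISO 8601", "python-data-cleaning")
    for outcome in (True, True, True):
        chain.record_run("python-data-cleaning", outcome)
    print(chain.skill_for("python-data-cleaning"))
```

After the third successful run, the Task is promoted, and `skill_for` returns the two stored steps instead of `None`. A real system would also need to demote a Skill that stops working; this sketch omits that.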

The brilliance of this design is that it mirrors human learning: from specific experiences to abstract rules, and then to automated skills. The MemOS team calls this capability "Memory-Augmented Generation" and has published two related papers on arXiv [7].

Benchmark results support this design. In the LongMemEval evaluation, MemOS's cross-session reasoning improved by 40.43% over the GPT-4o-mini baseline. In the PrefEval-10 personalized preference evaluation, the reported improvement was a striking 2568% [5].
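Figures like these are best read as relative gains over a baseline. A 2568% gain cannot be in percentage points on a metric capped at 100. Converted to multiples of the baseline score, they are easier to compare. The helper functions below are a generic sketch with illustrative names, not part of any benchmark suite. The last one shows a consequence of simple arithmetic: if a metric is capped at 100%, a 2568% relative gain is only possible from a baseline below about 3.75%.

```python
def relative_improvement_pct(new_score: float, baseline_score: float) -> float:
    """Relative gain over a baseline, in percent: (new - baseline) / baseline * 100."""
    return (new_score - baseline_score) / baseline_score * 100


def implied_multiple(improvement_pct: float) -> float:
    """How many times the baseline score a relative gain implies (159% -> 2.59x)."""
    return 1 + improvement_pct / 100


def max_baseline_pct(improvement_pct: float, cap: float = 100.0) -> float:
    """Highest baseline score, on a metric capped at `cap`, that still allows the gain."""
    return cap / implied_multiple(improvement_pct)


if __name__ == "__main__":
    for gain in (40.43, 159, 2568):
        print(f"+{gain}% -> {implied_multiple(gain):.4g}x baseline, "
              f"baseline must be <= {max_baseline_pct(gain):.2f}%")
```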

How Developers Can Quickly Get Started with MemOS

If you want to integrate MemOS into your Agent project, here's a quick start guide:

Step One: Choose a deployment method. MemOS offers two modes. In Cloud mode, you register for an API Key on the MemOS Dashboard and integrate with a few lines of code. In Local mode, you deploy via Docker and all data is stored locally in SQLite, which suits scenarios with data privacy requirements.

Step Two: Initialize the memory system. The core concept is the MemCube (Memory Cube): each MemCube is the memory space of one user or one Agent. Multiple MemCubes are managed together through the MOS (Memory Operating System) layer. Here's a code example:

```python
from memos.mem_os.main import MOS
from memos.configs.mem_os import MOSConfig

# Initialize MOS from a JSON config file
config = MOSConfig.from_json_file("config.json")
memory = MOS(config)

# Create a user and register a memory space (MemCube) for them
memory.create_user(user_id="your-user-id")
memory.register_mem_cube("path/to/mem_cube", user_id="your-user-id")

# Add conversation memory
memory.add(
    messages=[
        {"role": "user", "content": "My project uses Python for data analysis"},
        {"role": "assistant", "content": "Understood, I will remember this background information"},
    ],
    user_id="your-user-id",
)

# Retrieve relevant memories later
results = memory.search(query="What language does my project use?", user_id="your-user-id")
```

Step Three: Integrate the MCP protocol. MemOS v1.1.2 and later fully support the Model Context Protocol (MCP), meaning you can use MemOS as an MCP Server, allowing any MCP-enabled IDE or Agent framework to directly read and write external memories.

A common pitfall: MemOS's memory extraction relies on LLM inference, so if the underlying model is too weak, memory quality suffers. Developers in the Reddit community have reported that small local models give lower memory accuracy than calling the OpenAI API [8]. In production, use at least a GPT-4o-mini-class model as the memory processing backend.

In daily work, Agent-level memory management solves the problem of "how machines remember," but for developers and knowledge workers, "how humans efficiently accumulate and retrieve information" is equally important. YouMind's Board feature offers a complementary approach: you can save research materials, technical documents, and web links uniformly into a knowledge space, and the AI assistant will automatically organize them and support cross-document Q&A. For example, when evaluating MemOS, you can clip GitHub READMEs, arXiv papers, and community discussions to the same Board with one click, then directly ask, "What are the benchmark differences between MemOS and Mem0?" The AI will retrieve answers from all the materials you've saved. This "human + AI collaborative accumulation" model complements MemOS's Agent memory management well.

Side-by-Side Comparison of Mainstream Agent Memory Solutions

Several open-source projects now compete in the Agent memory space. Here's a comparison of four of the most representative:

| Tool | Best Use Case | Open Source License | Core Advantages | Main Limitations |
|---|---|---|---|---|
| MemOS | Complex Agents requiring memory evolution and Skill reuse | Apache 2.0 | Memory evolution chain, SOTA benchmark results, MCP support | Heavier architecture, potentially over-engineered for small projects |
| Mem0 | Quickly adding a memory layer to existing Agents | Apache 2.0 | One-line code integration, cloud-hosted, rich ecosystem | Coarser memory granularity, no Skill evolution support |
| Zep | Long-term memory for enterprise-grade conversational systems | Commercial + Open Source | Automatic summarization, entity extraction, enterprise-grade security | Limited features in the open-source version; full features require payment |
| Letta (formerly MemGPT) | Research projects and custom memory architectures | Apache 2.0 | Highly customizable, strong academic background | High barrier to entry, smaller community |

A 2025 Zhihu article, "AI Memory System Horizontal Review," reproduced the benchmarks of these solutions in detail. It concluded that MemOS performed most consistently on evaluation sets such as LoCoMo and LongMemEval. It also called MemOS the "only Memory OS with consistent official evaluations, GitHub cross-tests, and community reproduction results" [9].

If what you need is not Agent-level memory management but personal or team knowledge accumulation and retrieval, YouMind addresses a different layer of the problem. It positions itself as an integrated "learning → thinking → creating" studio. You can save sources such as web pages, PDFs, videos, and podcasts, and its AI organizes them automatically and supports cross-document Q&A. Agent memory systems focus on "making machines remember," while YouMind focuses on "helping people manage knowledge efficiently." Note, however, that YouMind does not currently provide Agent memory APIs like those of MemOS; the two address different levels of need.

Selection Advice:

- If you are building complex Agents that require cross-session memory and experience reuse, MemOS currently has the strongest published benchmark results.

- If you just need to quickly add a memory layer to an existing Agent, Mem0 has the lowest integration cost.

- If you are an enterprise customer and require compliance and security, Zep's enterprise version is worth considering.

- If you are a researcher looking to deeply customize memory architecture, Letta offers the highest flexibility.

FAQ

Q: What is the difference between MemOS and RAG (Retrieval-Augmented Generation)?

A: RAG retrieves information from external knowledge bases and injects it into the Prompt, essentially following a "look up every time, insert every time" pattern. MemOS, by contrast, manages memory as a system-level component that supports automatic extraction, evolution, and promotion of memory into Skills. The two are complementary: MemOS handles conversational memory and experience accumulation, while RAG handles static knowledge base retrieval.

Q: Which LLMs does MemOS support? What are the hardware requirements for deployment?

A: MemOS can call mainstream models such as OpenAI's GPT series and Anthropic's Claude via API, and can also integrate local models via Ollama. Cloud mode has no hardware requirements. For Local mode, a Linux environment is recommended. The smallest built-in MemReader model has 0.6B parameters and can run on an ordinary GPU. Docker deployment works out of the box.

Q: How secure is MemOS's data? Where is memory data stored?

A: In Local mode, all data is stored in a local SQLite database and is never uploaded to external servers. In Cloud mode, data is stored on MemOS's official servers. Enterprise users are advised to use Local mode or a private deployment.

Q: How high are the Token costs for AI Agents generally?

A: Take a typical customer service Agent: each interaction consumes approximately 3,150 input Tokens and 400 output Tokens. An application with 10,000 daily active users averaging 5 interactions per user per day handles about 1.5 million interactions a month. That is roughly 4.7 billion input Tokens and 600 million output Tokens. At GPT-4o's list price of $2.50 per million input Tokens and $10 per million output Tokens, this comes to roughly $17,800 per month. Memory optimization solutions like MemOS can reduce this figure by over 50%.
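Estimates like this are easy to reproduce. Multiply daily users, interactions, and per-interaction Tokens over a month, then apply per-million-Token prices. The function below is a generic sketch with prices as parameters. The values in the usage line are GPT-4o's list prices of $2.50 and $10 per million input and output Tokens. They are an assumption to replace with your provider's current rates.

```python
def monthly_token_cost(
    daily_active_users: int,
    interactions_per_user_per_day: int,
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
    days: int = 30,
) -> float:
    """Estimated monthly LLM spend in dollars for a fixed per-interaction Token profile."""
    interactions = daily_active_users * interactions_per_user_per_day * days
    input_cost = interactions * input_tokens / 1_000_000 * input_price_per_million
    output_cost = interactions * output_tokens / 1_000_000 * output_price_per_million
    return input_cost + output_cost


if __name__ == "__main__":
    # 10,000 DAU x 5 interactions/day, 3,150 input + 400 output Tokens each,
    # priced at GPT-4o list rates (an assumption; check current pricing).
    cost = monthly_token_cost(10_000, 5, 3_150, 400, 2.50, 10.00)
    print(f"Estimated monthly cost: ${cost:,.2f}")
```

The function assumes a flat per-interaction Token profile. With full-history reloading, the input Token count per interaction would instead keep growing over a session.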

Q: Besides MemOS, what other methods can reduce Agent Token costs?

A: Mainstream methods include Prompt compression (e.g., LLMLingua), semantic caching (e.g., Redis semantic cache), context summarization, and selective loading strategies. Redis's 2026 technical blog points out that semantic caching can completely bypass LLM inference calls in scenarios with highly repetitive queries, leading to significant cost savings [10]. These methods can be used in conjunction with MemOS.

Summary

The AI Agent memory problem is fundamentally a system architecture problem, not merely a model capability problem. MemOS's answer is to free memory from the Prompt and run it as an independent operating system layer. The published benchmark results support this approach: Token consumption reduced by 61%, temporal reasoning improved by 159%, and SOTA results across four major evaluation sets.

For developers, the most noteworthy aspect is MemOS's "conversation → Task → Skill" evolution chain. It transforms the Agent from a tool that "starts from scratch every time" into a system capable of accumulating experience and continuously evolving. This may be the critical step for Agents to go from "usable" to "effective."

If you are interested in AI-driven knowledge management and information accumulation, you are welcome to try YouMind for free and experience the integrated workflow of "learning → thinking → creating."

References

[1] LLM Context Window Management and Long Context Strategies 2026

[2] Cutting Through the Noise: Smarter Context Management for LLM-Powered Agents

[3] Understanding LLM Cost Per Token: A Practical Guide for 2026

[4] Ranked First in Four Major Evaluation Sets, How MemOS Defines the New Infrastructure of the AI Era

[5] MemOS GitHub Repository: AI Memory OS for LLM and Agent Systems

[7] MemOS: A Memory Operating System for AI Systems

[8] Reddit LocalLLaMA Community: MemOS Discussion Thread

[10] LLM Token Optimization: Cutting Costs and Latency in 2026