Lenny Opens 350+ Newsletter Dataset: How to Integrate It with Your AI Assistant Using MCP

Author

Lynne

TL;DR Key Takeaways

- Lenny Rachitsky has made over 350 Newsletter articles and 300+ podcast transcripts available in AI-friendly Markdown format. Free users can access a subset, while paid users get the full collection.

- The dataset comes with an MCP server and a GitHub repository, allowing direct integration with AI tools like Claude Code and Cursor.

- The community has already built 50+ creative projects based on this data, including an RPG game, a parenting website, and a Twitter bot.

- This article provides a complete guide from data acquisition to MCP integration, along with 5 categories of creative application scenarios.

The Newsletter Dataset Behind 1.1 Million Subscribers, Now Open to Everyone

You might have heard the name Lenny Rachitsky. This former Airbnb product lead started writing his Newsletter in 2019. It now has over 1.1 million subscribers and generates over $2 million in annual revenue, making it the #1 business Newsletter on Substack [1]. His podcast also ranks among the top ten in tech, with guests drawn from Silicon Valley's top product managers, growth experts, and entrepreneurs.

On March 17, 2026, Lenny did something unprecedented: he made all his content assets available as an AI-readable Markdown dataset. With 350+ in-depth Newsletter articles, 300+ full podcast transcripts, a companion MCP server, and a GitHub repository, anyone can now build AI applications using this data [2].

This article covers what the dataset contains, how to connect it to your AI tools via the MCP server, the 50+ creative projects the community has already built, and how you can use the data to create your own AI knowledge assistant. It is written for content creators, Newsletter authors, AI application developers, and knowledge management enthusiasts.

What Lenny's Dataset Contains: A Complete Archive of Top-Tier Product Knowledge

This is not a simple content dump. Lenny's dataset is carefully organized and designed specifically for consumption by AI tools.

In terms of data scale, free users can access a starter pack of 10 Newsletter articles and 50 podcast transcripts, and connect to a starter-level MCP server via LennysData.com. Paid subscribers, on the other hand, gain access to the complete 349 Newsletter articles and 289 podcast transcripts, plus full MCP access and a private GitHub repository [3].

In terms of data format, all files are in pure Markdown format, ready for direct use with Claude Code, Cursor, and other AI tools. The index.json file in the repository contains structured metadata such as titles, publication dates, word counts, Newsletter subtitles, podcast guest information, and episode descriptions. It's worth noting that Newsletter articles published within the last 3 months are not included in the dataset.
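As a minimal sketch of how the metadata in index.json could be put to work, the Python snippet below filters entries by type and sorts them by word count. The key names (`type`, `title`, `date`, `word_count`, `guest`) and the flat list layout are assumptions made for illustration, and the sample entries are invented; check the actual file in the repository before relying on any of them.

```python
import json

# Hypothetical sample mirroring the kinds of metadata the article lists.
# The real index.json may use different keys and nesting.
SAMPLE_INDEX = """
[
  {"type": "newsletter", "title": "Example post A", "date": "2023-05-02", "word_count": 3200},
  {"type": "podcast", "title": "Example episode B", "date": "2024-01-15", "word_count": 11800, "guest": "Example Guest"},
  {"type": "newsletter", "title": "Example post C", "date": "2024-09-10", "word_count": 1500}
]
"""


def filter_entries(entries, kind, min_words=0):
    """Return entries of the given type with at least min_words words, longest first."""
    selected = [
        e for e in entries
        if e.get("type") == kind and e.get("word_count", 0) >= min_words
    ]
    return sorted(selected, key=lambda e: e.get("word_count", 0), reverse=True)


entries = json.loads(SAMPLE_INDEX)
long_posts = filter_entries(entries, "newsletter", min_words=2000)
for entry in long_posts:
    print(entry["date"], entry["title"], entry["word_count"])
```

Filtering on metadata first keeps the number of Markdown files you actually open, or hand to an AI tool, small.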

In terms of content quality, this data covers core areas such as product management, user growth, startup strategies, and career development. Podcast guests include executives and founders from companies like Airbnb, Figma, Notion, Stripe, and Duolingo. This is not randomly scraped web content, but a high-quality knowledge base accumulated over 7 years and validated by 1.1 million people.

Why This Matters: Content Creators' Data Awakening

The global AI training dataset market reached $3.59 billion in 2025 and is projected to grow to $23.18 billion by 2034, with a compound annual growth rate of 22.9% [4]. In this era where data is fuel, high-quality, niche content data has become extremely scarce.

Lenny's approach represents a new creator economy model. Traditionally, Newsletter authors protect the value of their content with paywalls. Lenny does the opposite: he opens his content as "data assets," allowing the community to build new layers of value on top of it. Far from diminishing his paid subscriptions, the dataset's spread has attracted more attention and created a developer ecosystem around his content.

Compared to other content creators' practices, this "content as API" approach is almost unprecedented. As Lenny himself said, "I don't think anyone has done anything like this before." [2] The core insight of this model is: when your content is good enough and your data structure is clear enough, the community will help you create value you never even imagined.

Imagine this scenario: you're a product manager preparing a presentation on user growth strategies. Instead of spending hours sifting through Lenny's historical articles, you can directly ask an AI assistant to retrieve all discussions about "growth loops" from 300+ podcast episodes and automatically generate a summary with specific examples and data. This is the efficiency leap brought by structured datasets.

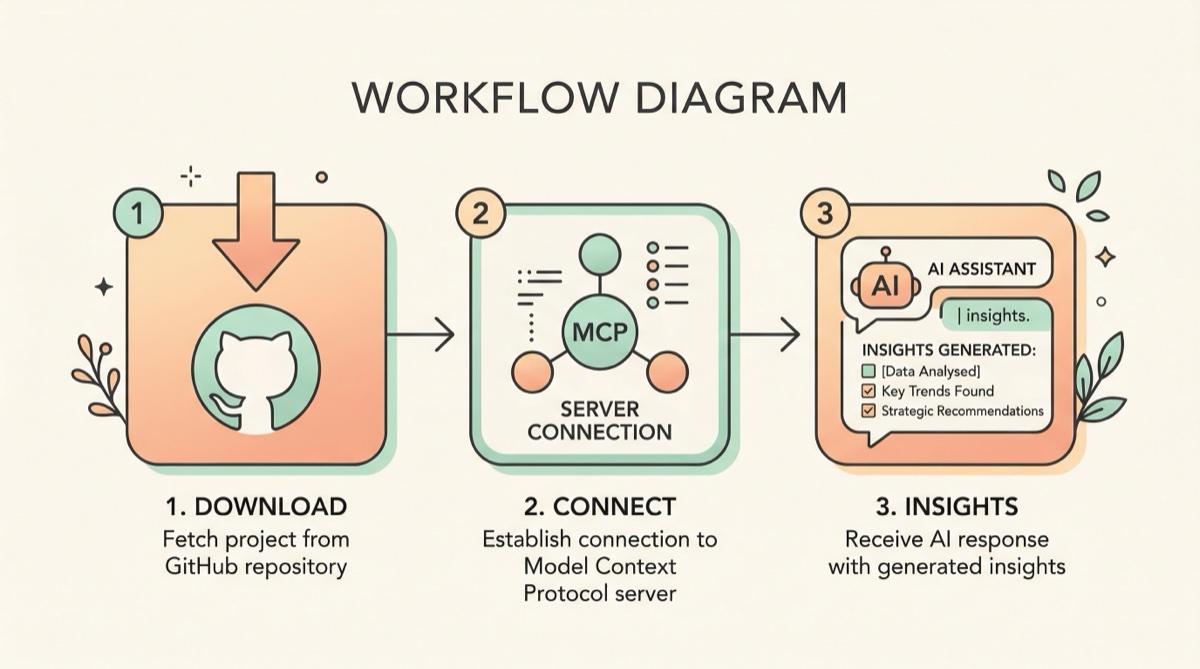

Three Steps to Integration: From Data Acquisition to MCP Server Connection

Integrating Lenny's dataset into your AI workflow is not complicated. Here are the specific steps.

Step One: Obtain the Data

Go to LennysData.com and enter your subscription email to get a login link. Free users can download the starter pack ZIP file or directly clone the public GitHub repository:

```plaintext
git clone https://github.com/LennysNewsletter/lennys-newsletterpodcastdata.git
```

Paid users can log in to get access to the private repository containing the full dataset.
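Once the files are on disk, a quick sanity check helps confirm that the download or clone is complete. The sketch below counts Markdown files and total words under a folder. The folder layout is an assumption (the demo builds a throwaway directory with an invented `newsletters/post.md`); point `summarize_markdown` at your own local copy instead.

```python
import tempfile
from pathlib import Path


def summarize_markdown(root):
    """Return (file_count, total_words) for all .md files under root."""
    files = sorted(Path(root).rglob("*.md"))
    total_words = sum(len(p.read_text(encoding="utf-8").split()) for p in files)
    return len(files), total_words


# Demo on a temporary directory so the example runs without the real dataset.
with tempfile.TemporaryDirectory() as tmp:
    folder = Path(tmp) / "newsletters"  # hypothetical folder name
    folder.mkdir()
    (folder / "post.md").write_text("# Title\n\nThree words here.", encoding="utf-8")
    (Path(tmp) / "README.txt").write_text("not markdown", encoding="utf-8")
    demo = summarize_markdown(tmp)
    print(demo)
```

Comparing the file count against the numbers quoted for your tier (starter pack or full collection) is a cheap way to spot a partial download.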

Step Two: Connect to the MCP Server

MCP (Model Context Protocol) is an open standard introduced by Anthropic, allowing AI models to access external data sources in a standardized way. Lenny's dataset provides an official MCP server, which you can configure directly in Claude Code or other MCP-supported clients. Free users can use the starter-level MCP, while paid users get MCP access to the full data.
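As an illustration of what the configuration might look like, the sketch below builds a project-level `.mcp.json` entry of the kind Claude Code reads, using the `mcpServers` map with an HTTP server entry. The server name `lennys-data` and the URL are placeholders, and whether the server is reached over HTTP or needs authentication is an assumption; use the connection details shown on LennysData.com after you log in.

```python
import json


def build_mcp_config(server_name, server_url):
    """Return a dict in the mcpServers layout used by project-level .mcp.json files."""
    return {
        "mcpServers": {
            server_name: {
                "type": "http",  # assumption: the server speaks MCP over HTTP
                "url": server_url,
            }
        }
    }


# Placeholder values: replace with the details provided for your account.
config = build_mcp_config("lennys-data", "https://example.com/mcp")
print(json.dumps(config, indent=2))
```

Saving the printed JSON as `.mcp.json` in a project root is one way to register a server for that project; other MCP clients have their own settings files, so check your client's documentation.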

Once configured, you can directly search and reference all of Lenny's content in your AI conversations. For example, you can ask: "Among Lenny's podcast guests, who discussed PLG (Product-Led Growth) strategies? What were their core insights?"

Step Three: Choose Your Building Tool

Once you have the data, you can choose different building paths based on your needs. If you are a developer, you can use Claude Code or Cursor to build applications directly based on the Markdown files. If you are more inclined towards knowledge management, you can import this content into your preferred knowledge base tool.

For example, you can create a dedicated Board in YouMind and batch-save links to Lenny's Newsletter articles there. YouMind's AI will automatically organize this content, and you can ask questions, retrieve, and analyze the entire knowledge base at any time. This method is particularly suitable for creators and knowledge workers who don't code but want to efficiently digest large amounts of content with AI.

A common misconception to note: do not try to dump all data into one AI chat window at once. A better approach is to process it in batches by topic, or let the AI retrieve it on demand via the MCP server.
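The batching advice above can be sketched in a few lines of Python. The snippet groups documents by topic using simple keyword counts, then splits each group into batches that stay under a word budget. The topic keywords, file names, and the 20-word budget are all hypothetical and chosen only to keep the demo small; a real budget would be set by your model's context window.

```python
from collections import defaultdict

# Hypothetical topic keywords; tune these to your own questions.
TOPIC_KEYWORDS = {
    "growth": ["growth loop", "retention", "acquisition"],
    "career": ["promotion", "career", "interview"],
}


def assign_topic(text):
    """Return the topic with the most keyword hits, or 'other' if none match."""
    lowered = text.lower()
    best_topic, best_hits = "other", 0
    for topic, keywords in TOPIC_KEYWORDS.items():
        hits = sum(lowered.count(k) for k in keywords)
        if hits > best_hits:
            best_topic, best_hits = topic, hits
    return best_topic


def batch_by_topic(docs, max_words=2000):
    """Group docs by topic, then split each group into batches under max_words."""
    grouped = defaultdict(list)
    for name, text in docs.items():
        grouped[assign_topic(text)].append((name, text))

    batches = []
    for topic, items in grouped.items():
        current, current_words = [], 0
        for name, text in items:
            n = len(text.split())
            # Start a new batch when adding this doc would exceed the budget.
            if current and current_words + n > max_words:
                batches.append((topic, current))
                current, current_words = [], 0
            current.append(name)
            current_words += n
        if current:
            batches.append((topic, current))
    return batches


docs = {
    "a.md": "Growth loop basics and retention tips. " * 3,
    "b.md": "How to prepare for a promotion and plan your career.",
    "c.md": "Retention and acquisition in a growth loop. " * 3,
}
batches = batch_by_topic(docs, max_words=20)
for topic, names in batches:
    print(topic, names)
```

A document that is longer than the budget on its own still gets a batch to itself, so very long transcripts may need to be split further before use.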

What the Community Has Built: 50+ Creative Project Case Studies

Before this release, Lenny had made only the podcast transcripts available, and the community had already built over 50 projects on that data. Below are 5 categories of the most representative applications.

Gamified Learning: LennyRPG. Product designer Ben Shih transformed 300+ podcast transcripts into LennyRPG, a Pokémon-style role-playing game. Players encounter podcast guests in a pixelated world and "battle" and "capture" them by answering product management questions. Ben used the Phaser game framework, Claude Code, and the OpenAI API, and took the project from concept to launch in just a few weeks [2].

Cross-Domain Knowledge Transfer: Tiny Stakeholders. Tiny Stakeholders, developed by Ondrej Machart, applies product management methodologies from the podcasts to parenting scenarios. This project demonstrates an interesting characteristic of high-quality content data: good frameworks and mental models can be transferred across domains.

Structured Knowledge Extraction: Lenny Skills Database. The Refound AI team extracted 86 actionable skills from the podcast archives, each with specific context and source citations [5]. They used Claude for preprocessing and ChromaDB for vector embeddings, making the entire process highly automated.

Social Media AI Agent: Learn from Lenny. @learnfromlenny is an AI Agent running on X (Twitter) that answers users' product management questions based on the podcast archives, with each reply including the original source.

Visual Content Re-creation: Lenny Gallery. Lenny Gallery transforms the core insights of each podcast episode into beautiful infographics, turning an hour-long podcast into a shareable visual summary.

What these projects have in common is that none of them simply repackages the content; each creates a new form of value on top of the original data.
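Several of these projects, such as the Lenny Skills Database and @learnfromlenny, depend on retrieving the most relevant passages and citing where they came from. The sketch below illustrates that retrieval step with a plain bag-of-words cosine similarity from the Python standard library. This is a deliberately simple stand-in, not the pipeline any of these teams used; a production setup would more likely use learned embeddings and a vector store such as ChromaDB. The file names and passages are invented.

```python
import math
import re
from collections import Counter


def vectorize(text):
    """Turn text into a word-count vector (lowercased alphanumeric tokens)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(query, chunks, top_k=2):
    """Return up to top_k (source, score) pairs with a non-zero score, best first."""
    q = vectorize(query)
    scored = [(cosine(q, vectorize(text)), source) for source, text in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(source, round(score, 3)) for score, source in scored[:top_k] if score > 0]


# Invented passages standing in for chunks of podcast transcripts.
chunks = [
    ("episode-a.md", "Pricing pages should test annual plans and discounts."),
    ("episode-b.md", "A growth loop feeds new users back into acquisition."),
    ("episode-c.md", "Hiring your first product manager takes patience."),
]
results = search("how does a growth loop work", chunks)
print(results)
```

Returning the source file alongside each score is what makes it possible to attach a citation to every answer, as the projects above do.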

Tool Comparison: How to Choose Your Newsletter Data Management Solution

Facing a large-scale content dataset like Lenny's, different tools are suitable for different use cases. Below is a comparison of mainstream solutions:

| Tool | Best Use Case | Free Version | Core Advantages |
|---|---|---|---|
| YouMind | AI knowledge management for non-technical users | ✅ | Multi-source import (URL/PDF/podcast) + AI Q&A, supports Board publishing and sharing |
| Claude Code | Developers building applications directly with code | ✅ (with limits) | Native MCP support, strong code generation capabilities |
| Cursor | Developers integrating AI within their IDE | ✅ (with limits) | Native Markdown file support, suitable for large projects |
| NotebookLM | Single-session research and document Q&A | ✅ | Google ecosystem integration, audio overview feature |
| | Reading highlights and note management | ❌ | Powerful highlighting and annotation system |

If you are a developer, Claude Code + MCP server is the most direct path, allowing real-time querying of the full data in conversations. If you are a content creator or knowledge worker who doesn't want to code but wishes to digest this content with AI, YouMind's Board feature is more suitable: you can batch import article links and then use AI to ask questions and analyze the entire knowledge base. YouMind is currently more suitable for "collect → organize → AI Q&A" knowledge management scenarios but does not yet support direct connection to external MCP servers. For projects requiring deep code development, Claude Code or Cursor is still recommended.

FAQ

Q: Is Lenny's dataset completely free?

A: Not entirely. Free users can access a starter pack containing 10 Newsletters and 50 podcast transcripts, as well as starter-level MCP access. The complete 349 articles and 289 transcripts require a paid subscription to Lenny's Newsletter (approximately $150 annually). Articles published within the last 3 months are not included in the dataset.

Q: What is an MCP server? Can regular users use it?

A: MCP (Model Context Protocol) is an open standard introduced by Anthropic in late 2024, allowing AI models to access external data in a standardized way. It is currently primarily used through development tools like Claude Code and Cursor. If regular users are not familiar with the command line, they can first download the Markdown files and import them into knowledge management tools like YouMind to use AI Q&A features.

Q: Can I use this data to train my own AI model?

A: The use of the dataset is governed by the LICENSE.md file. Currently, the data is primarily designed for contextual retrieval in AI tools (e.g., RAG), rather than direct use for model fine-tuning. It is recommended to carefully read the license agreement in the GitHub repository before use.

Q: Besides Lenny, have other Newsletter authors released similar datasets?

A: Currently, Lenny is the first leading Newsletter author to open up full content in such a systematic way (Markdown + MCP + GitHub). This approach is unprecedented in the creator economy but may inspire more creators to follow suit.

Q: What is the deadline for the creation challenge?

A: The deadline for the creation challenge launched by Lenny is April 15, 2026. Participants need to build projects based on the dataset and submit links in the Newsletter comment section. Winners will receive a free one-year Newsletter subscription.

Summary

Lenny Rachitsky's release of a dataset of 350+ Newsletter articles and 300+ podcast transcripts marks a significant turning point in the content creator economy: high-quality content is no longer just something to be read; it is becoming a programmable data asset. Through the MCP server and structured Markdown format, any developer or creator can integrate this knowledge into their AI workflow. The community has already demonstrated the potential of this model with over 50 projects.

Whether you want to build an AI-powered knowledge assistant or digest and organize Newsletter content more efficiently, now is a great time to act. You can go to LennysData.com to get the data, or try using YouMind to import the Newsletter and podcast content you follow into your personal knowledge base, letting AI help you close the loop from information gathering to knowledge creation.

References

[1] The World's Biggest Newsletters in 2026

[3] Lenny's Newsletter and Podcast Data GitHub Repository

[4] AI Training Dataset Market Size and Trends Report

[5] How to Build a Skills Database from Lenny's Podcast