ClawFeed Hands-on Review: How AI Compresses a 5,000-Person Feed into 20 Essential Highlights

Author: Leah

TL;DR: Key Takeaways

- ClawFeed is an open-source AI information feed management tool that uses a recursive summary mechanism (4 hours → Day → Week → Month) to compress thousands of Twitter/RSS updates into 20 essential highlights per day.

- The developer's 10-day test data shows daily information processing time dropping from 2 hours to 5 minutes, with a noise filtration rate of 95% and memory usage under 50MB.

- Summaries maintain the "@username + original quote" format rather than abstract generalizations, ensuring information is traceable and verifiable.

Spending 2 Hours a Day on Twitter—Are You Actually "Gaining Information"?

You follow 500, 1,000, or even 5,000 Twitter accounts. Every morning you open your timeline, and hundreds or thousands of tweets come rushing in. You scroll through the screen, trying to find those few truly important messages. Two hours pass, and you've gathered a pile of fragmented impressions, yet you can't quite say what actually happened in the AI field today.

This isn't an isolated case. According to 2025 data from Statista, global users spend an average of 141 minutes on social media every day [1]. In Reddit communities like r/socialmedia and r/Twitter, "how to efficiently filter valuable content from Twitter feeds" is a recurring question. One user's description is typical: "Every time I log into X, I spend too much time scrolling the feed trying to find things that are actually useful." [2]

This article is for content creators, AI tool enthusiasts, and developers focused on efficiency. It takes a close look at the engineering behind an open-source project, ClawFeed: how it uses AI Agents to read your entire feed and, according to the developer's test data, reaches a 95% noise filtration rate through recursive summarization.

The Core Dilemma of Twitter Feed Management: Exponential Growth vs. Linear Attention

Traditional Twitter information management solutions mainly fall into three categories: manually filtering follow lists, using Twitter Lists for grouping, or using multi-column browsing with TweetDeck. The common problem with these methods is that they essentially still rely on human attention to perform information filtering.

When you follow 200 people, Lists are barely manageable. But when the following count exceeds 1,000, the volume of information grows far faster than you can read it, and the efficiency of manual browsing drops sharply. A blogger on Zhihu shared their experience: even after carefully selecting 20 high-quality AI information source accounts, it still takes a significant amount of time every day to browse and discern content [3].

The root of the problem is that human attention is linear, while the growth of information feeds is exponential. You cannot solve the problem by "following fewer people," because the breadth of information sources directly determines the quality of your coverage. What is truly needed is a middle layer—an AI agent capable of full-scale reading and intelligent compression.

This is exactly what ClawFeed aims to solve.

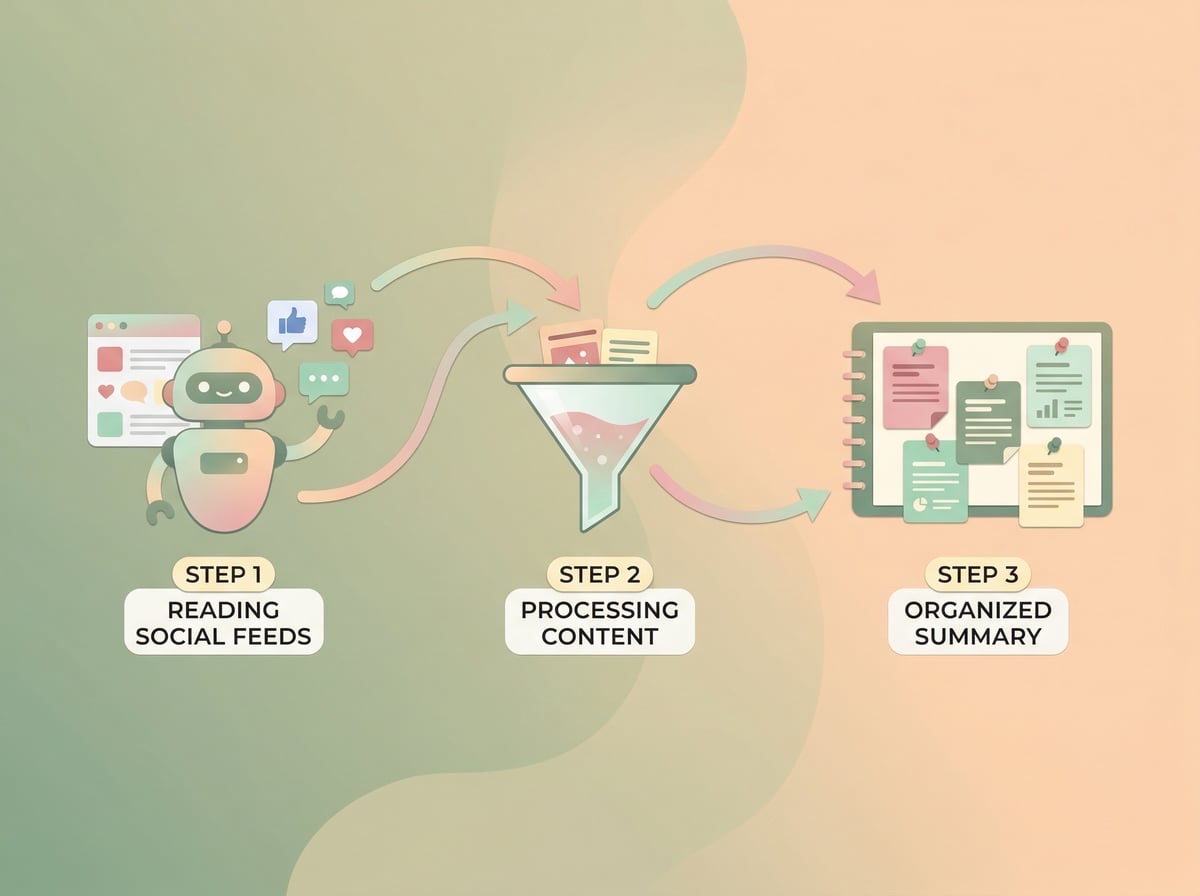

Recursive Summarization: The Core Technical Architecture of ClawFeed

ClawFeed's core design philosophy can be summarized in one sentence: Let an AI Agent read everything for you, and then use multi-layer recursive summarization to gradually compress information density.

Specifically, it employs a four-frequency recursive summary mechanism:

- 4-Hour Summary: The AI reads all information sources (Twitter, RSS, HackerNews, Reddit, GitHub Trending, etc.) every 4 hours to generate the first layer of structured summaries.

- Daily Report: Compresses multiple 4-hour summaries from the day to extract the most important information.

- Weekly Report: Aggregates a week's worth of daily reports to identify trends and persistent topics.

- Monthly Report: Distills monthly insights from weekly reports to form a macro perspective.

The brilliance of this design is that each layer of summary is built from the output of the layer below it rather than from the raw data. Only the 4-hour layer reads raw items, so its workload still scales with the number of sources, but the daily, weekly, and monthly passes each read a small, roughly fixed number of summaries. The final result: a feed from 5,000 people is compressed into about 20 essential summaries per day.
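
The layering can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `Summary` type, the `compress` and `summarizeWindow` functions, and the item counts are hypothetical and are not taken from the ClawFeed codebase. The `compress` function stands in for the model call by deduplicating and truncating, which is far cruder than a real summarizer.

```typescript
// Hypothetical sketch: none of these names come from the ClawFeed codebase.
type Level = "4h" | "day" | "week" | "month";
type Summary = { level: Level; highlights: string[] };

// Stand-in for the model call. A real system would ask an LLM to select and
// merge highlights; here we only dedupe and keep the first `keep` entries.
function compress(level: Level, inputs: Summary[], keep: number): Summary {
  const seen = new Set<string>();
  const highlights: string[] = [];
  for (const input of inputs) {
    for (const h of input.highlights) {
      if (!seen.has(h) && highlights.length < keep) {
        seen.add(h);
        highlights.push(h);
      }
    }
  }
  return { level, highlights };
}

// First layer: the only step that touches raw items.
function summarizeWindow(rawItems: string[], keep: number): Summary {
  return compress("4h", [{ level: "4h", highlights: rawItems }], keep);
}

// Six 4-hour windows in a day, each with 100 made-up raw items.
const windows: Summary[] = [];
for (let w = 0; w < 6; w++) {
  const raw = Array.from({ length: 100 }, (_, i) => `window ${w} item ${i}`);
  windows.push(summarizeWindow(raw, 10));
}

// The daily report reads 6 summaries (60 highlights), not 600 raw items.
const daily = compress("day", windows, 20);
console.log(daily.highlights.length); // 20
```

The same `compress` call could be reused for the weekly and monthly layers, each time feeding it the summaries of the layer below.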

Regarding summary format, ClawFeed made a notable design decision: adhering to the "@username + original quote" format instead of generating abstract summaries. This means every summary preserves the source and original phrasing, allowing readers to quickly judge credibility and jump to the original text for deep reading with one click.
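
As a rough illustration of what a traceable entry could look like, here is a small sketch. The `Highlight` interface, its field names, and the output layout are assumptions for this example only; the article does not document ClawFeed's actual schema or rendering.

```typescript
// Hypothetical shape; field names are illustrative, not ClawFeed's schema.
interface Highlight {
  username: string; // handle without the leading "@"
  quote: string;    // original wording, kept unmodified
  url: string;      // link back to the source post
}

// Render one entry so the author, the exact words, and the source stay visible.
function formatHighlight(h: Highlight): string {
  return `@${h.username}: "${h.quote}" (${h.url})`;
}

// Made-up sample data.
const example: Highlight = {
  username: "example_user",
  quote: "We just shipped version 2.0.",
  url: "https://example.com/post/1",
};

console.log(formatHighlight(example));
// @example_user: "We just shipped version 2.0." (https://example.com/post/1)
```

Keeping the quote as a separate, unmodified field is what makes the entry checkable against the original post.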

Engineering Implementation: Minimalist Technical Trade-offs

ClawFeed's tech stack choice reflects a restrained engineering philosophy. The entire project has zero framework dependencies, using only Node.js native HTTP modules plus better-sqlite3, with a runtime memory footprint of less than 50MB. This is remarkably clear-headed in an era where projects often reflexively pull in Express, Prisma, and Redis.

Choosing SQLite over PostgreSQL or MongoDB means deployment is extremely simple. A single Docker command gets it running:

```bash
docker run -d -p 8767:8767 -v clawfeed-data:/app/data kevinho/clawfeed
```

The project is released as both an OpenClaw Skill and a Zylos Component, meaning it can run independently or be called as a module within a larger AI Agent ecosystem. OpenClaw automatically detects and loads the skill via the SKILL.md file in the project, allowing the Agent to generate summaries via cron, serve a Web dashboard, and handle bookmarking commands.

In terms of source support, ClawFeed covers Twitter/X user updates, Twitter Lists, RSS/Atom feeds, HackerNews, Reddit subreddits, GitHub Trending, and arbitrary web scraping. It also introduces the concept of Source Packs, where users can package their curated information sources to share with the community, allowing others to gain the same coverage with a one-click installation.

Test Data and Practical Guide: From Installation to Daily Use

According to 10-day test data released by the developer, ClawFeed's core performance metrics are as follows:

| Metric | Before Use | After Use | Change |
|---|---|---|---|
| Daily Processing Time | 2 Hours | 5 Minutes | 96% Decrease |
| Noise Filtration Rate | Manual Judgment | 95% Auto-filtered | Significant Improvement |
| Memory Usage | N/A | < 50MB | Extremely Low Resource Consumption |
| Source Coverage | Manual Browsing | Full Auto-reading | No Omissions |
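
The time figures in the table can be checked with a few lines of arithmetic. This is only a worked calculation on the numbers reported above; the helper name `percentDecrease` is introduced here for illustration.

```typescript
// Percentage drop from `before` to `after`.
function percentDecrease(before: number, after: number): number {
  return ((before - after) / before) * 100;
}

const beforeMinutes = 2 * 60; // 2 hours per day before
const afterMinutes = 5;       // 5 minutes per day after
const testDays = 10;          // length of the reported test

const decrease = percentDecrease(beforeMinutes, afterMinutes);
const hoursSaved = ((beforeMinutes - afterMinutes) * testDays) / 60;

console.log(decrease.toFixed(1));   // 95.8 (the table rounds this to 96%)
console.log(hoursSaved.toFixed(1)); // 19.2 hours over the 10-day test
```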

The fastest way to get started with ClawFeed is via a one-click installation through ClawHub:

```bash
clawhub install clawfeed
```

Alternatively, you can deploy manually: clone the repository, install dependencies, configure the .env file, and start the service. The project supports Google OAuth multi-user login, allowing each user to have independent sources and bookmark lists after configuration.

The recommended daily workflow is as follows: Spend 5 minutes in the morning browsing the daily summary. Use the "Mark & Deep Dive" feature for items of interest, and the AI will perform a more in-depth analysis of the bookmarked content. Spend 10 minutes on the weekend reading the weekly report to grasp the week's trends. At the end of the month, read the monthly report to form a macro understanding.

If you want to further consolidate this essential information, you can use ClawFeed's summary output in conjunction with YouMind. ClawFeed supports RSS and JSON Feed outputs, allowing you to save these summary links directly in a YouMind Board. You can then use YouMind's AI Q&A feature to perform cross-period analysis on summaries over time. For example, ask "What were the three most important changes in the AI programming tools field over the past month?", and it can provide an evidence-backed answer drawn from all the summaries you've accumulated. YouMind's Skills feature also supports setting up scheduled tasks to automatically fetch ClawFeed's RSS output and generate weekly knowledge reports.

Comparison with Similar Tools: Who is ClawFeed for?

There are many tools on the market designed to solve information overload, but their focus varies:

| Tool | Best Scenario | Free Version | Core Advantage |
|---|---|---|---|
| ClawFeed | Automated recursive summaries for multi-source feeds | ✅ Fully Open Source | Four-frequency recursive compression, traceable info |
| | Personal AI reading assistant | ✅ | Multi-source aggregation + custom AI prompt templates |
| YouMind | Information consolidation and knowledge creation | ✅ | Board knowledge spaces + AI Q&A + multi-model support |
| Twitter Lists | Manual grouped browsing | ✅ | Native feature, no extra tools required |
| | Social media management and content discovery | ❌ | Cross-platform management + influence tracking |

The ideal user profile for ClawFeed is: content creators and developers who follow a large number of sources, need full coverage but lack the time to browse every item, and possess basic technical skills (able to run Docker or npm). Its limitation lies in the need for self-deployment and maintenance, which presents a barrier for non-technical users. If you prefer a "Save + Deep Research + Creation" workflow, YouMind's Board and Craft editor would be more suitable choices.

FAQ

Q: What information sources does ClawFeed support? Is it only for Twitter?

A: Not just Twitter. ClawFeed supports Twitter/X user updates and lists, RSS/Atom feeds, HackerNews, Reddit subreddits, GitHub Trending, arbitrary web scraping, and can even subscribe to the summary outputs of other ClawFeed users. Through the Source Packs feature, you can also import curated source collections shared by the community with one click.

Q: How is the quality of the AI summaries? Will it miss important information?

A: ClawFeed uses the "@username + original quote" format, preserving the source and original phrasing to avoid information distortion caused by abstract AI generalizations. The recursive summary mechanism ensures every piece of information is processed by the AI at least once. The tested 95% noise filtration rate means most low-value content is effectively filtered while high-value information is retained.

Q: What technical conditions are required to deploy ClawFeed?

A: The minimum requirement is a server capable of running Docker or Node.js. One-click installation via ClawHub is the simplest method, or you can manually clone the repo and run npm install and npm start. The entire service uses less than 50MB of memory, so even a base-tier cloud server can run it.

Q: Is ClawFeed free?

A: It is completely free and open-source under the MIT license. You are free to use, modify, and distribute it. The only potential cost comes from AI model API fees (used for generating summaries), depending on the model you choose and the number of information sources.
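
To get a feel for how API usage might add up, the sketch below counts summary runs implied by the 4 hours → Day → Week → Month schedule. It assumes one model call per summary run, which the project does not state, and the function name `runsPerPeriod` is hypothetical. Multiply the total by your own per-run cost to get a rough estimate; the 4-hour runs will usually dominate because they are the only ones that read raw items.

```typescript
// Assumption: one model call per summary run; real call counts may differ.
interface RunCounts {
  fourHour: number;
  daily: number;
  weekly: number;
  monthly: number;
}

function runsPerPeriod(days: number): RunCounts {
  return {
    fourHour: days * (24 / 4),       // six 4-hour windows per day
    daily: days,                     // one daily report per day
    weekly: Math.floor(days / 7),    // complete weeks only
    monthly: Math.floor(days / 30),  // complete 30-day months only
  };
}

const runs = runsPerPeriod(30);
const totalRuns = runs.fourHour + runs.daily + runs.weekly + runs.monthly;

console.log(runs.fourHour, runs.daily, runs.weekly, runs.monthly); // 180 30 4 1
console.log(totalRuns); // 215
```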

Q: How can I connect ClawFeed summaries with other knowledge management tools?

A: ClawFeed supports RSS and JSON Feed formats, meaning any tool that supports RSS can connect to it. You can use Zapier, IFTTT, or n8n to automatically push summaries to Slack, Discord, or email, or directly subscribe to ClawFeed's RSS output in knowledge management tools like YouMind for long-term consolidation.
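
If you consume the JSON Feed output yourself, filtering by date is a typical first step. The sketch below assumes the output follows the public JSON Feed 1.1 format and uses only a subset of its fields; the sample feed content is made up, and no ClawFeed endpoint or URL is implied.

```typescript
// Subset of JSON Feed 1.1 fields; assumes ClawFeed's output follows that format.
interface JsonFeedItem {
  id: string;
  url?: string;
  content_text?: string;
  date_published?: string; // RFC 3339 timestamp
}

interface JsonFeed {
  version: string;
  title: string;
  items: JsonFeedItem[];
}

// Keep items published on or after `since`; items without a date are dropped.
function itemsSince(feed: JsonFeed, since: Date): JsonFeedItem[] {
  return feed.items.filter((item) => {
    if (!item.date_published) return false;
    return new Date(item.date_published).getTime() >= since.getTime();
  });
}

// Inline sample standing in for a real feed response; the content is made up.
const sample: JsonFeed = {
  version: "https://jsonfeed.org/version/1.1",
  title: "Example digest",
  items: [
    { id: "1", url: "https://example.com/1", content_text: "Older digest", date_published: "2025-01-01T08:00:00Z" },
    { id: "2", url: "https://example.com/2", content_text: "Newer digest", date_published: "2025-01-08T08:00:00Z" },
  ],
};

const recent = itemsSince(sample, new Date("2025-01-05T00:00:00Z"));
console.log(recent.map((item) => item.id)); // [ '2' ]
```

The filtered items could then be handed to whatever automation you already use for Slack, Discord, or email.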

Summary

The essence of information anxiety is not that there is too much information, but rather the lack of a reliable filtering and compression mechanism. ClawFeed provides an engineered solution through four-frequency recursive summarization (4 hours → Day → Week → Month), compressing daily processing time from 2 hours to 5 minutes. Its "@username + original quote" format ensures traceability, and its zero-framework tech stack keeps deployment and maintenance costs to a minimum.

For content creators and developers, efficiently acquiring information is only the first step. The more critical part is transforming that information into your own knowledge and creative material. If you are looking for a complete "Information Acquisition → Knowledge Consolidation → Content Creation" workflow, try using YouMind to capture ClawFeed's output, turning daily essential summaries into your personal knowledge base for instant retrieval, questioning, and creation.

References

[1] Daily time spent on social networking worldwide (2025)

[2] How do you filter valuable content on X (Twitter)? (Reddit Discussion)

[3] High-quality AI Information Sources I Frequently Watch: 20 Accounts on Twitter X (Zhihu)